Deep Generative Models

Research on improving and evaluating deep generative models, with a focus on VAEs, GANs, and autoregressive models

This project encompasses research on deep generative models, focusing on improving their training dynamics, evaluation metrics, and applications to sequential data.

Information Theory & Generative Models

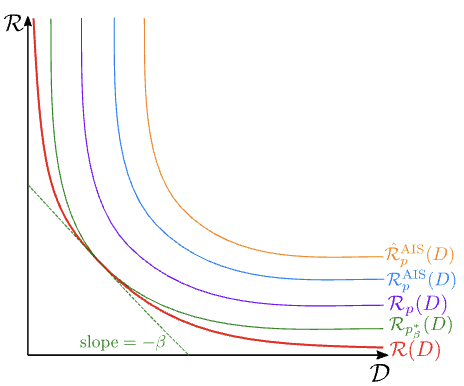

Evaluating Lossy Compression Rates of Deep Generative Models

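We developed a framework for evaluating generative models through the lens of lossy compression. Rather than reducing a model to a single scalar score, it characterizes each model by the trade-off between rate and distortion, a more principled measure of model quality than traditional metrics alone.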
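The quantity behind the lossy-compression view is the rate-distortion function: the minimum rate needed to reconstruct data within an average distortion D. As a hedged, textbook illustration (not the estimator used in this work), a scalar Gaussian source with variance sigma^2 under squared-error distortion has the closed form R(D) = max(0, 0.5 ln(sigma^2 / D)). The function name below is hypothetical.

```python
import math


def gaussian_rate_distortion(variance: float, distortion: float) -> float:
    """Rate in nats for a scalar Gaussian source under squared-error distortion.

    Textbook closed form R(D) = max(0, 0.5 * ln(variance / D)); used here only
    to illustrate the rate/distortion trade-off, not to evaluate a model.
    """
    if variance <= 0 or distortion <= 0:
        raise ValueError("variance and distortion must be positive")
    return max(0.0, 0.5 * math.log(variance / distortion))


# Trace out a few points of the curve for a unit-variance source.
for d in (0.01, 0.1, 0.5, 1.0, 2.0):
    print(f"D={d:<5} R={gaussian_rate_distortion(1.0, d):.3f} nats")
```

Lower distortion costs a higher rate, and once D reaches the source variance the rate drops to zero. For deep generative models no such closed form exists, which is why a dedicated evaluation framework is needed.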

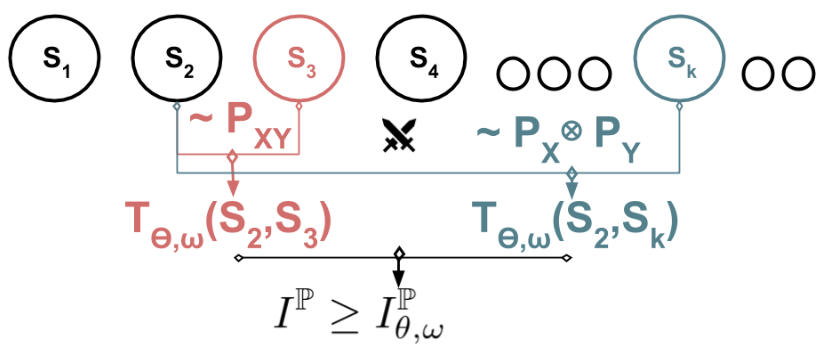

Better Long-Range Dependency By Bootstrapping A Mutual Information Regularizer

We developed a bootstrapping approach using mutual information regularization to improve the modeling of long-range dependencies in sequential models.
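A mutual information regularizer needs some estimate of the dependence between an earlier part of a sequence and a later one. As a hedged illustration, the sketch below uses the standard InfoNCE lower bound with a dot-product critic. This estimator, the critic, and the "segment features" are assumptions chosen for clarity; they are not necessarily the regularizer or the bootstrapping scheme used in this work.

```python
import numpy as np


def infonce_lower_bound(x: np.ndarray, y: np.ndarray) -> float:
    """InfoNCE estimate of a lower bound on I(X; Y), in nats.

    x, y: arrays of shape (batch, dim); row i of x is paired with row i of y.
    A dot-product critic scores every (x_i, y_j) pair; positives are on the diagonal.
    The estimate can never exceed log(batch).
    """
    scores = x @ y.T                                      # (batch, batch) critic values
    scores = scores - scores.max(axis=1, keepdims=True)   # stabilize the softmax
    log_softmax = scores - np.log(np.exp(scores).sum(axis=1, keepdims=True))
    batch = x.shape[0]
    return float(np.mean(np.diag(log_softmax)) + np.log(batch))


rng = np.random.default_rng(0)
past = rng.standard_normal((128, 16))                     # stand-in for earlier-segment features
future_dep = past + 0.1 * rng.standard_normal((128, 16))  # strongly dependent later segment
future_ind = rng.standard_normal((128, 16))               # independent later segment
dep_bound = infonce_lower_bound(past, future_dep)
ind_bound = infonce_lower_bound(past, future_ind)
print(dep_bound, ind_bound)
```

In a training setup this estimate would be computed on model representations inside an autodiff framework and added, with a weight, to the training objective so that the model is rewarded for retaining information across long spans.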

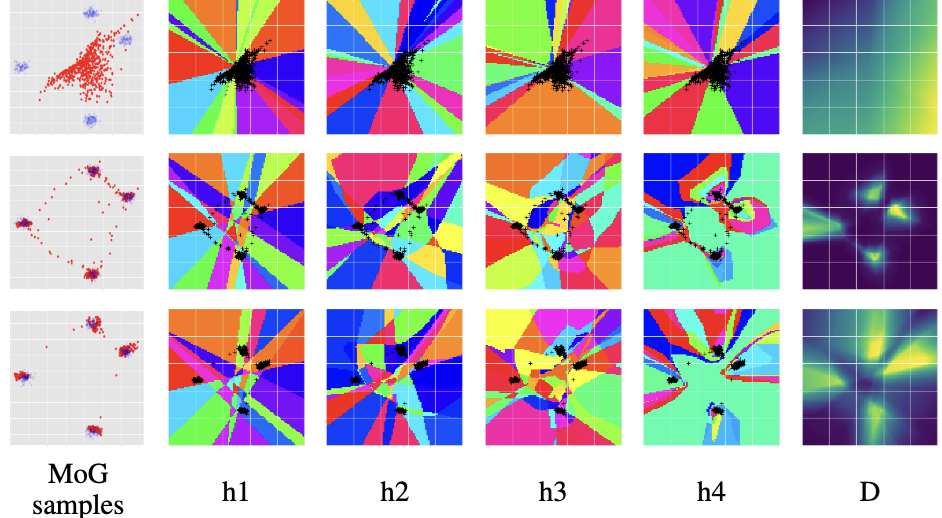

Improving GAN Training via Binarized Representation Entropy (BRE)

A regularizer for GAN training that stabilizes learning by encouraging the discriminator to allocate its capacity more effectively, which it does by maximizing the entropy of the binarized activation patterns of the discriminator's hidden units.
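To make "entropy of binary activation patterns" concrete, here is a simplified NumPy sketch of a BRE-style penalty, assuming a soft-sign surrogate for binarization and two terms: a marginal term (each unit should be active for about half of the batch) and a pairwise term (different samples should have dissimilar binary codes). The function names, the `eps` value, and the equal weighting of the two terms are assumptions, not the exact published formulation.

```python
import numpy as np


def soft_sign(h: np.ndarray, eps: float = 1e-3) -> np.ndarray:
    """Differentiable surrogate for sign(h), with values in (-1, 1)."""
    return h / (np.abs(h) + eps)


def bre_penalty(h: np.ndarray, eps: float = 1e-3) -> float:
    """Sketch of a binarized-representation-entropy style penalty.

    h: pre-activations of one discriminator layer, shape (batch, units).
    Lower values indicate more diverse (higher-entropy) binary activation patterns.
    """
    s = soft_sign(h, eps)                    # (batch, units), approximately +/-1
    batch, units = s.shape
    # Marginal term: the batch mean of each unit's sign should be near zero.
    marginal = np.mean(np.mean(s, axis=0) ** 2)
    # Pairwise term: normalized agreement between different samples' codes.
    sim = np.abs(s @ s.T) / units            # (batch, batch); diagonal is about 1
    off_diag = sim[~np.eye(batch, dtype=bool)]
    pairwise = np.mean(off_diag)
    return float(marginal + pairwise)


rng = np.random.default_rng(0)
diverse = rng.standard_normal((64, 256))                       # varied activation patterns
collapsed = np.tile(rng.standard_normal((1, 256)), (64, 1))   # every sample identical
penalty_diverse = bre_penalty(diverse)
penalty_collapsed = bre_penalty(collapsed)
print(penalty_diverse, penalty_collapsed)
```

A collapsed layer, where every input produces the same pattern, is penalized heavily, while diverse patterns score near zero. In practice the penalty would be computed with an autodiff framework and added, scaled by a coefficient, to the discriminator loss.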

Sequential Models & VAEs

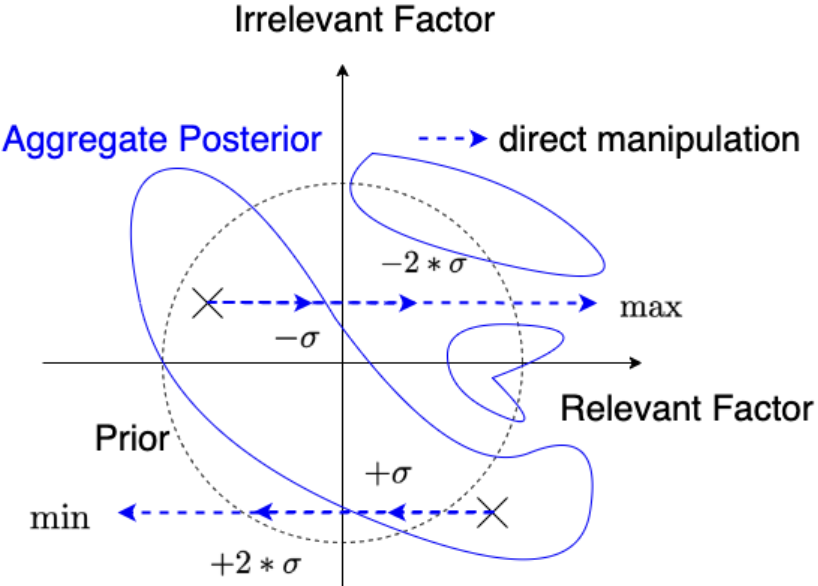

On Variational Learning of Controllable Representations for Text without Supervision

A framework for learning controllable text representations without explicit supervision, enabling better control over generated content while maintaining naturalness.

Preventing Posterior Collapse in Sequence VAEs with Pooling

We introduced a novel pooling mechanism for sequence VAEs that helps prevent the common problem of posterior collapse while maintaining model expressiveness.
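One way to picture the pooling idea: instead of computing the approximate posterior from the encoder's last hidden state alone, compute it from a pooled summary of all hidden states. The NumPy sketch below assumes mean or max pooling over non-padded positions and linear Gaussian posterior heads; all names, shapes, and weights are hypothetical and are not taken from the paper's code.

```python
import numpy as np


def pooled_summary(hidden: np.ndarray, mask: np.ndarray, mode: str = "max") -> np.ndarray:
    """Pool encoder hidden states over time, ignoring padded steps.

    hidden: (batch, time, dim) encoder outputs.
    mask:   (batch, time), 1 for real tokens and 0 for padding.
    Assumes every sequence has at least one real token.
    """
    m = mask[..., None].astype(hidden.dtype)              # (batch, time, 1)
    if mode == "mean":
        return (hidden * m).sum(axis=1) / np.maximum(m.sum(axis=1), 1.0)
    if mode == "max":
        masked = np.where(m > 0, hidden, -np.inf)         # padding can never win the max
        return masked.max(axis=1)
    raise ValueError(f"unknown pooling mode: {mode}")


def sample_posterior(summary, w_mu, w_logvar, rng):
    """Linear Gaussian posterior heads plus a reparameterized sample z."""
    mu = summary @ w_mu
    logvar = summary @ w_logvar
    z = mu + np.exp(0.5 * logvar) * rng.standard_normal(mu.shape)
    return z, mu, logvar


rng = np.random.default_rng(0)
hidden = rng.standard_normal((2, 5, 8))                   # e.g. RNN outputs for 2 sentences
mask = np.array([[1, 1, 1, 1, 1], [1, 1, 1, 0, 0]])
summary = pooled_summary(hidden, mask, mode="max")
w_mu = 0.1 * rng.standard_normal((8, 4))
w_logvar = 0.1 * rng.standard_normal((8, 4))
z, mu, logvar = sample_posterior(summary, w_mu, w_logvar, rng)
print(z.shape)
```

Because the summary draws on every time step, sequences that happen to end similarly can still map to distinct posteriors, which plausibly makes it harder for the decoder to ignore the latent code.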

Variational Hyper RNN for Sequence Modeling

A hierarchical RNN architecture with variational inference that better captures long-range dependencies and hierarchical structure in sequential data.

Impact

This research has advanced the field of generative modeling by:

- Developing novel evaluation metrics based on information theory

- Creating techniques to prevent common failure modes in VAEs

- Improving the modeling of long-range dependencies

- Enabling better control over generated content

- Providing theoretical insights into model behavior

The methods have been applied to various domains including text generation, sequence modeling, and image generation, contributing to both theoretical understanding and practical applications of deep generative models.